Talend Studio has been a workhorse for data integration teams for over a decade. Its drag-and-drop visual interface made it possible for organizations to build thousands of ETL jobs without writing raw code. But the landscape is shifting. Qlik’s acquisition of Talend, combined with rising cloud-native expectations and the gravitational pull of platforms like Databricks, Snowflake, and dbt, has forced enterprises to reconsider their Talend investments. This guide walks through the architecture, challenges, and automation strategies for migrating Talend Studio jobs to modern cloud-native pipelines.

The Talend Landscape: What’s Changing and Why It Matters

Talend’s product family has evolved considerably over the years. Talend Open Studio provided the free, community-driven foundation. Talend Studio (the commercial version) added enterprise features like parallelism, scheduling, and a centralized repository. Talend Cloud introduced browser-based design and remote execution. And then Qlik acquired the entire stack.

The Qlik acquisition has introduced significant uncertainty. Licensing models are changing, roadmap priorities are shifting toward Qlik’s analytics-first vision, and many enterprises are receiving end-of-support timelines for on-premises Talend Studio installations. For teams with hundreds or thousands of Talend jobs in production, this is not a theoretical concern—it is an operational emergency.

Beyond acquisition politics, there are genuine technical reasons to migrate:

- Java dependency: Talend generates Java code for every job, which is then compiled and run on the JVM. This means managing JVM versions, Java heap tuning, and classpath conflicts across your entire pipeline fleet.

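- Performance limits at scale: Talend’s row-by-row processing model, running inside a single JVM, struggles with the multi-terabyte datasets that modern analytics demands. Spark-based engines split data into partitions that are processed in parallel across a cluster, so throughput scales with the cluster instead of being bounded by one Job Server, which can mean large speedups on these workloads.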

- Licensing costs: Enterprise Talend licenses are expensive, and the post-acquisition pricing trajectory is unpredictable.

- Operational isolation: Talend jobs run on dedicated Job Servers, creating infrastructure that sits outside your cloud-native Kubernetes, Airflow, or Databricks orchestration.

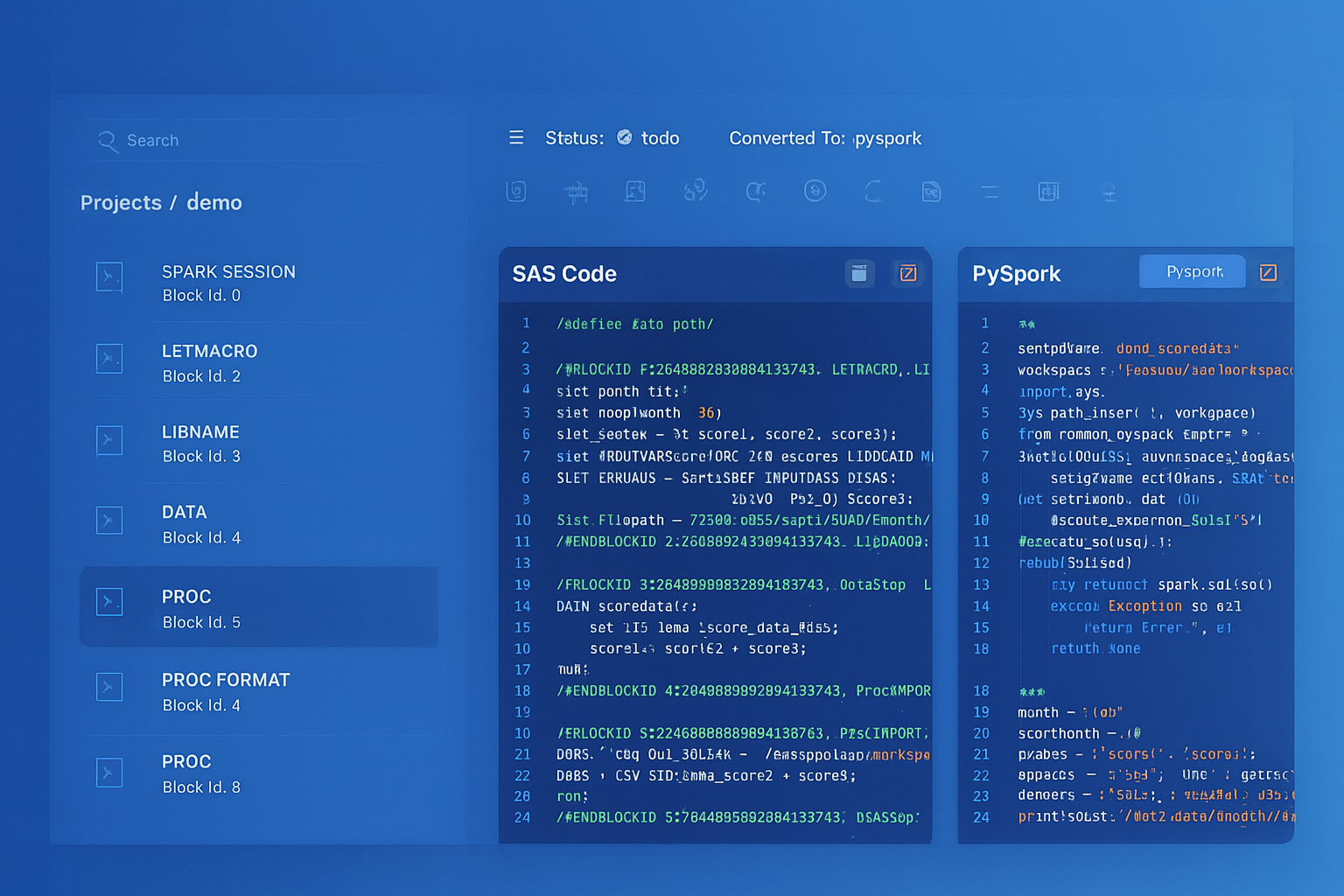

Talend to Apache PySpark migration — automated end-to-end by MigryX

Talend Architecture: What You’re Actually Migrating

Before you can migrate Talend jobs, you need to understand what they contain. Talend stores everything as XML, and the structure is more complex than most teams expect.

Internal Structure

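Each job is saved as a .item file, an XML document describing the job’s components, their parameters, and the connections between them, alongside a .properties file holding item metadata. Components refer to context variables and to repository metadata connections by name or ID rather than embedding their values, so a parser has to resolve references across files instead of reading a single document. This makes automated parsing significantly more challenging than working with SQL scripts.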
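To make that structure concrete, here is a minimal sketch that walks a simplified, hypothetical excerpt of a .item file with Python’s standard xml.etree.ElementTree. It assumes components appear as node elements with a componentName attribute and a UNIQUE_NAME elementParameter, data flows appear as connection elements with source and target attributes, and context variables appear as contextParameter elements. Real .item files carry many more attributes and element types, so treat this as an illustration of the shape of the problem, not a complete parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, heavily simplified excerpt of a Talend .item file.
# Real files contain many more attributes, parameters, and element types.
SAMPLE_ITEM = """
<talendfile:ProcessType xmlns:talendfile="platform:/resource/org.talend.model/model/TalendFile.xsd">
  <context name="Default">
    <contextParameter name="db_host" type="id_String" value="localhost"/>
    <contextParameter name="input_dir" type="id_String" value="/data/in"/>
  </context>
  <node componentName="tFileInputDelimited">
    <elementParameter name="UNIQUE_NAME" value="tFileInputDelimited_1"/>
  </node>
  <node componentName="tMap">
    <elementParameter name="UNIQUE_NAME" value="tMap_1"/>
  </node>
  <node componentName="tDBOutput">
    <elementParameter name="UNIQUE_NAME" value="tDBOutput_1"/>
  </node>
  <connection connectorName="FLOW" source="tFileInputDelimited_1" target="tMap_1"/>
  <connection connectorName="FLOW" source="tMap_1" target="tDBOutput_1"/>
</talendfile:ProcessType>
"""


def summarize_job(item_xml: str) -> dict:
    """Extract components, data-flow edges, and context variables from .item XML."""
    root = ET.fromstring(item_xml.strip())

    components = {}
    for node in root.iter("node"):
        # The instance name (e.g. tMap_1) is stored as an elementParameter.
        unique_name = next(
            (p.get("value") for p in node.iter("elementParameter")
             if p.get("name") == "UNIQUE_NAME"),
            None,
        )
        components[unique_name] = node.get("componentName")

    # Each connection links two component instances by their unique names.
    edges = [
        (c.get("source"), c.get("target"), c.get("connectorName"))
        for c in root.iter("connection")
    ]
    context_vars = sorted({p.get("name") for p in root.iter("contextParameter")})
    return {"components": components, "edges": edges, "context_vars": context_vars}


if __name__ == "__main__":
    summary = summarize_job(SAMPLE_ITEM)
    print(summary["components"])
    print(summary["edges"])
    print(summary["context_vars"])
```

Even this toy excerpt shows why SQL-style text scanning falls short: the data flow only exists as references between elements, and those references must be joined back together.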

MigryX: Purpose-Built Parsers for Every Legacy Technology

MigryX does not rely on generic text matching or regex-based parsing. For every supported legacy technology, MigryX has built a dedicated Abstract Syntax Tree (AST) parser that understands the full grammar and semantics of that platform. This means MigryX captures not just what the code does, but why — understanding implicit behaviors, default settings, and platform-specific quirks that generic tools miss entirely.

Target Platforms: Where Talend Jobs Go Next

There is no single “right” target for Talend migration. The choice depends on your data platform strategy, team skills, and performance requirements. The most common targets include:

- PySpark on Databricks or EMR: Best for large-scale data processing. PySpark DataFrames replace Talend’s row-by-row model with distributed columnar operations.

- Snowpark (Python or Scala): Ideal if Snowflake is your primary data platform. Snowpark pushes computation into Snowflake’s engine, so data does not have to leave Snowflake to be processed.

- dbt (SQL-first): For transformation-heavy pipelines where the logic is primarily SQL joins, aggregations, and CASE expressions. dbt models replace Talend’s visual transformations with version-controlled SQL.

- Airflow DAGs: Talend’s job orchestration (parent jobs calling child jobs, conditional execution, error handling) maps naturally to Airflow’s DAG model.
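As a rough illustration of that mapping, the sketch below treats each parent-to-child tRunJob call, and each trigger that makes one child wait for another (OnSubjobOk-style), as a dependency edge. It then uses the standard library’s graphlib.TopologicalSorter to group jobs into waves that could run in parallel, which is the information an Airflow DAG’s task dependencies encode. The job names are hypothetical, and Airflow itself is left out so the sketch runs with no extra packages.

```python
from graphlib import TopologicalSorter

# Hypothetical child jobs invoked by a parent job's tRunJob components.
# Each key lists the jobs that must succeed before it may start.
JOB_DEPENDENCIES = {
    "stage_customers": set(),
    "stage_orders": set(),
    "build_fact_orders": {"stage_customers", "stage_orders"},
    "refresh_aggregates": {"build_fact_orders"},
}


def execution_waves(dependencies):
    """Group jobs into waves; jobs in the same wave have no mutual dependencies
    and could run as parallel tasks in an orchestrator such as Airflow."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the job graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # mark the wave as finished to unlock its dependents
    return waves


print(execution_waves(JOB_DEPENDENCIES))
```

In an actual Airflow DAG, each dependency would typically become a `>>` relationship between two tasks; the cycle check is worth keeping either way, since Airflow rejects cyclic DAGs.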

From parsed legacy code to production-ready modern equivalents — MigryX automates the entire conversion pipeline

From Legacy Complexity to Modern Clarity with MigryX

Legacy ETL platforms encode business logic in visual workflows, proprietary XML formats, and platform-specific constructs that are opaque to standard analysis tools. MigryX’s deep parsers crack open these proprietary formats and extract the underlying data transformations, business rules, and data flows. The result is complete transparency into what your legacy code actually does — often revealing undocumented logic that even the original developers had forgotten.

Component Mapping: Talend to Modern Equivalents

The following table maps the most common Talend components to their modern equivalents across PySpark, SQL, and orchestration layers:

| Talend Component | Modern Equivalent | Notes |
|---|---|---|
| tMap | DataFrame join + select + filter | Most complex; handles joins, transforms, and routing in one component |
| tJoin | DataFrame.join() or SQL JOIN | Inner/outer join modes map directly to PySpark or SQL |
| tFileInputDelimited | spark.read.csv() | Schema, delimiter, and header options map directly |
| tDBInput | spark.read.jdbc() or SQL source | Connection metadata must be resolved from Talend repository |
| tLogRow | Python logging or .show() | Debug output; often removed in production conversions |
| tRunJob | Airflow task dependency or Python module call | Parent-child job relationships become orchestration dependencies |
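To show what “join + select + filter” means for a tMap, here is a sketch of a single hypothetical tMap: a left-outer lookup of customers on orders, one derived column, an output filter, and a reject route for rows that fail it. It uses plain Python dictionaries so it runs anywhere; in PySpark the same steps would roughly become a DataFrame.join() with how="left", a withColumn() or select() for the expression, and complementary filter() calls for the main and reject outputs. Column names and the business rule are invented for the example.

```python
# Hypothetical main flow and lookup flow for a single tMap.
orders = [
    {"order_id": 1, "customer_id": "C1", "amount": 120.0},
    {"order_id": 2, "customer_id": "C2", "amount": 15.0},
    {"order_id": 3, "customer_id": "C9", "amount": 300.0},  # no matching customer
]
customers = [
    {"customer_id": "C1", "region": "EMEA"},
    {"customer_id": "C2", "region": "APAC"},
]


def run_tmap(main_rows, lookup_rows):
    """Decompose one tMap into lookup join, expression, filter, and reject routing."""
    # Hash lookup on the join key, the in-memory analogue of a lookup flow.
    lookup = {row["customer_id"]: row for row in lookup_rows}
    accepted, rejected = [], []
    for row in main_rows:
        match = lookup.get(row["customer_id"])  # left outer join: no match -> None
        out = {
            "order_id": row["order_id"],
            "region": match["region"] if match else None,
            # Derived column, analogous to a tMap output expression.
            "amount_band": "HIGH" if row["amount"] >= 100 else "LOW",
        }
        # Output filter: only orders with a known region reach the main output;
        # everything else is routed to the reject output.
        (accepted if out["region"] is not None else rejected).append(out)
    return accepted, rejected


accepted, rejected = run_tmap(orders, customers)
print(accepted)
print(rejected)
```

Splitting the component this way is what makes tMap the hardest row in the table: one visual box carries four separate operations, and each must be reproduced with the same join type and null behavior.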

Migration Phases: A Structured Approach

Migrating hundreds of Talend jobs is not a weekend project. A structured, phased approach reduces risk and builds confidence incrementally.

Phase 1: Discovery and Inventory

Parse every Talend job in the repository to build a complete inventory. For each job, extract the component list, context variable references, metadata connections, subjob dependencies, and scheduling information. This inventory becomes the migration backlog and drives prioritization.
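Once each job has been parsed, turning the results into a prioritized backlog can be as simple as the sketch below. The inventory data, the set of components flagged for manual review, and the scoring weights are all hypothetical choices for illustration; a real backlog would likely also weigh data volume, business criticality, and dependencies between jobs.

```python
from collections import Counter

# Hypothetical inventory: job name -> component types found while parsing its .item file.
INVENTORY = {
    "load_customers": ["tFileInputDelimited", "tMap", "tDBOutput"],
    "daily_orders": ["tDBInput", "tMap", "tJavaRow", "tAggregateRow", "tDBOutput"],
    "audit_log": ["tDBInput", "tLogRow"],
}

# Components assumed to need human review because they embed arbitrary Java code.
MANUAL_REVIEW = {"tJava", "tJavaRow", "tJavaFlex"}


def build_backlog(inventory):
    """Return fleet-wide component counts and jobs ordered from simplest to hardest."""
    component_counts = Counter(c for comps in inventory.values() for c in comps)
    backlog = []
    for job, comps in inventory.items():
        flagged = sorted(set(comps) & MANUAL_REVIEW)
        # Illustrative score: one point per component, five extra per Java component.
        score = len(comps) + 5 * sum(c in MANUAL_REVIEW for c in comps)
        backlog.append({"job": job, "score": score, "manual_review": flagged})
    backlog.sort(key=lambda item: (item["score"], item["job"]))
    return component_counts, backlog


counts, backlog = build_backlog(INVENTORY)
print(counts.most_common(3))
for item in backlog:
    print(item)
```

Starting with the lowest-scoring jobs gives the team quick wins that exercise the conversion tooling before the Java-heavy jobs arrive.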

Phase 2: Automated Conversion

Use automated tooling to convert Talend job XML into target-platform code. This is where the component mapping table above is applied programmatically. The goal is not 100% perfection on the first pass—it is 80-90% structural accuracy that human reviewers can refine.
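A toy version of “applying the mapping table programmatically” might look like the sketch below: a rule table keyed by component type, where each rule emits a line of PySpark source and anything without a rule becomes an explicit TODO for reviewers. The rule functions, parameter names, and component dictionaries are hypothetical; a production converter would work from a parsed intermediate representation rather than string templates, but the pattern of flagging gaps instead of guessing carries over.

```python
# Hypothetical rule table: Talend component type -> function emitting a PySpark line.
def _file_input(p):
    return (f'{p["out"]} = spark.read.csv("{p["path"]}", '
            f'sep="{p["sep"]}", header={p["header"]})')


def _log_row(p):
    return f'{p["in"]}.show()'


RULES = {
    "tFileInputDelimited": _file_input,
    "tLogRow": _log_row,
}


def convert(components):
    """Emit one line of PySpark per component; unmapped types become TODOs for review."""
    lines = []
    for comp in components:
        rule = RULES.get(comp["type"])
        if rule is None:
            lines.append(f'# TODO(manual): no rule for {comp["type"]} ({comp["name"]})')
        else:
            lines.append(rule(comp["params"]))
    return "\n".join(lines)


# Hypothetical job, already parsed into an ordered list of components.
JOB = [
    {"type": "tFileInputDelimited", "name": "tFileInputDelimited_1",
     "params": {"out": "row1", "path": "/data/in/orders.csv", "sep": ";", "header": True}},
    {"type": "tJavaRow", "name": "tJavaRow_1", "params": {}},
    {"type": "tLogRow", "name": "tLogRow_1", "params": {"in": "row1"}},
]
print(convert(JOB))
```

The generated text is only source code; it is not executed here, so the sketch does not need Spark installed.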

Phase 3: Manual Refinement

Certain patterns require human judgment: complex tJavaRow logic with Java library dependencies, custom error-handling flows, and business rules embedded in tMap expressions. Automated conversion provides the scaffold; engineers complete the last mile.

Phase 4: Validation and Parallel Run

Run the original Talend jobs and the converted pipelines side by side against the same input data. Compare outputs row by row, column by column. Automated data validation tools can diff millions of rows and flag discrepancies at the cell level.
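The core of a cell-level comparison is small, as this in-memory sketch shows: index both outputs by a primary key, report keys present on only one side, then compare every column of the shared keys. The sample rows and the choice of id as key are hypothetical. For millions of rows the same logic would normally run inside the target engine, for example as a full outer join on the key, rather than in Python dictionaries.

```python
def diff_outputs(legacy_rows, new_rows, key):
    """Compare two outputs keyed by a primary key and report cell-level differences."""
    legacy = {r[key]: r for r in legacy_rows}
    new = {r[key]: r for r in new_rows}
    report = {
        "missing_in_new": sorted(legacy.keys() - new.keys()),
        "extra_in_new": sorted(new.keys() - legacy.keys()),
        "cell_mismatches": [],
    }
    for k in sorted(legacy.keys() & new.keys()):
        for col in sorted(legacy[k].keys() | new[k].keys()):
            # .get() treats an absent column as None; a stricter check could flag it separately.
            old_val, new_val = legacy[k].get(col), new[k].get(col)
            if old_val != new_val:
                report["cell_mismatches"].append((k, col, old_val, new_val))
    return report


# Hypothetical outputs from the Talend job and the converted pipeline.
talend_out = [
    {"id": 1, "name": "Ann", "total": 10.0},
    {"id": 2, "name": "Bob", "total": None},
    {"id": 3, "name": "Cy", "total": 7.5},
]
spark_out = [
    {"id": 1, "name": "Ann", "total": 10.0},
    {"id": 2, "name": "Bob", "total": 0.0},
    {"id": 4, "name": "Di", "total": 1.0},
]
print(diff_outputs(talend_out, spark_out, key="id"))
```

Floating-point columns usually need a tolerance rather than exact equality; the exact comparison here keeps the sketch short.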

Phase 5: Cutover and Decommission

Once validation passes, redirect orchestration to the new pipelines, monitor for a stabilization period, and decommission the Talend infrastructure.

How MigryX Automates Talend Migration

MigryX parses Talend .item and .properties XML files directly, including Git-exported Talend projects. The engine resolves context variable references, metadata connection IDs, and subjob dependencies across the entire repository. Each tMap, tAggregateRow, tJoin, and tFilterRow is decomposed into its logical operations and reconstructed as PySpark DataFrame code, Snowpark expressions, or dbt SQL models.

For jobs with complex orchestration (conditional triggers, error handlers, parallel subjobs), MigryX generates Airflow DAG definitions that preserve the original execution flow. The result is a complete, runnable migration package—not just translated code, but production-ready pipelines with logging, error handling, and configuration management built in.
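Independent of any particular tool, a converted pipeline needs some replacement for Talend context groups, which let one job run against different environments and accept per-run overrides (for example via --context_param). The sketch below shows one simple way to do that in Python; the context names, variable names, and the JOB_CTX_ environment-variable prefix are hypothetical conventions chosen for the example, not part of Talend or MigryX.

```python
import os

# Hypothetical equivalent of a Talend context group with two contexts.
CONTEXTS = {
    "Default": {"db_host": "localhost", "batch_size": "1000"},
    "Prod": {"db_host": "prod-db.internal", "batch_size": "50000"},
}


def load_context(name, env=None, prefix="JOB_CTX_"):
    """Start from the named context, then let environment variables override single values,
    similar in spirit to passing --context_param to a Talend job at run time."""
    env = os.environ if env is None else env
    values = dict(CONTEXTS[name])  # copy so the defaults are never mutated
    for key in values:
        override = env.get(prefix + key.upper())
        if override is not None:
            values[key] = override
    return values


print(load_context("Prod", env={"JOB_CTX_BATCH_SIZE": "10"}))
```

Values stay strings here, as they arrive from the environment; casting them to the types declared in the original context group is a natural next step.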

Validation Strategy: Proving Equivalence

Migration without validation is just hope. A rigorous validation strategy includes three layers:

- Structural validation: Verify that every Talend job has a corresponding converted pipeline. Check that all components are accounted for and no logic was dropped during conversion.

- Data validation: Run both the original and converted pipelines against identical input datasets. Compare row counts, column checksums, and sample record comparisons. Automated diffing tools can handle tables with millions of rows.

- Behavioral validation: Test edge cases—null handling, empty input files, database connection failures, and malformed data. Talend and PySpark may handle these differently, and the converted code must match the expected behavior.
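For the data-validation layer, row counts and per-column checksums can be compared cheaply before any row-level diff. This sketch computes an order-independent fingerprint by hashing each value with SHA-256 and summing the digests modulo 2**64, so the two pipelines may emit rows in different orders without a sort. The summing scheme and the use of repr() for normalization are assumptions for illustration; real comparisons usually need explicit normalization of decimals, timestamps, and string padding first, since repr(1) and repr(1.0) differ.

```python
import hashlib


def column_fingerprints(rows, columns):
    """Row count plus an order-independent checksum per column."""
    sums = {col: 0 for col in columns}
    for row in rows:
        for col in columns:
            # repr() distinguishes None from the string "None" and 1 from "1".
            digest = hashlib.sha256(repr(row.get(col)).encode("utf-8")).digest()
            # Summing (mod 2**64) is commutative, so row order does not matter.
            sums[col] = (sums[col] + int.from_bytes(digest[:8], "big")) % 2**64
    return {"row_count": len(rows), "checksums": sums}


# Hypothetical outputs: same rows, different order, so fingerprints should match.
legacy = [{"id": 1, "v": "x"}, {"id": 2, "v": None}]
converted = [{"id": 2, "v": None}, {"id": 1, "v": "x"}]
print(column_fingerprints(legacy, ["id", "v"]) == column_fingerprints(converted, ["id", "v"]))
```

A mismatch in a single column’s checksum narrows the search before running a full row-by-row comparison.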

The most dangerous migrations are the ones that “look right” but silently produce different results for edge cases. Row-level data validation is not optional—it is the only way to build trust in converted pipelines.
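A classic example of “looks right, differs on edge cases” is null handling in filters. A row-level Java expression such as !"CANCELLED".equals(status) keeps rows whose status is null, but the obvious SQL translation status <> 'CANCELLED' drops them, because comparing NULL yields NULL. The sketch below reproduces this with Python’s built-in sqlite3; the table and values are hypothetical, and Spark SQL follows the same standard SQL null semantics.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, status TEXT)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, "OPEN"), (2, "CANCELLED"), (3, None)])

# Row-by-row logic, like a null-tolerant Java check: a null status is "not cancelled".
procedural = [row_id for row_id, status in conn.execute("SELECT id, status FROM orders ORDER BY id")
              if status != "CANCELLED"]

# Naive SQL translation: NULL <> 'CANCELLED' evaluates to NULL, so row 3 is silently dropped.
naive_sql = [r[0] for r in conn.execute(
    "SELECT id FROM orders WHERE status <> 'CANCELLED' ORDER BY id")]

# Null-safe translation that matches the row-by-row behavior.
null_safe = [r[0] for r in conn.execute(
    "SELECT id FROM orders WHERE status IS NULL OR status <> 'CANCELLED' ORDER BY id")]

print(procedural, naive_sql, null_safe)
```

Both translations look correct on data without nulls, which is exactly why test inputs need deliberate null, empty, and malformed values.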

Talend migration is a significant undertaking, but it is also an opportunity. The target platforms—PySpark, Snowpark, dbt, Airflow—are not just replacements for Talend. They are foundations for a modern data architecture that scales, integrates with cloud-native tooling, and eliminates the licensing and infrastructure overhead that Talend imposed. The key is to approach the migration with structure, automation, and relentless validation.

Why MigryX Is the Only Platform That Handles This Migration

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Deep AST parsing: MigryX’s custom-built parsers achieve 95% accuracy on every supported legacy technology — not through approximation, but through true semantic understanding.

- Merlin AI augmentation: Where deterministic parsing reaches its limit, Merlin AI resolves ambiguities and implicit behaviors, pushing accuracy to 99%.

- Complete coverage: MigryX supports 25+ source technologies including SAS, Informatica, DataStage, SSIS, Alteryx, Talend, ODI, Teradata, and Oracle PL/SQL.

- End-to-end automation: From parsing to conversion to validation — MigryX automates the entire pipeline, not just one step.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration — turning what used to be a multi-year manual effort into a streamlined, validated process. See it in action.

Ready to modernize your legacy code?

See how MigryX automates migration with precision, speed, and trust.
